Accuracy is one of the top criteria that senior marketing professionals look for in market research. But what exactly is “accurate” market research, and how do you ensure it’s part of your efforts? Let's dig more deeply into what accurate research truly means, some key mistakes companies make, and how you can avoid them.

What is accurate market research?

For most of us, “accurate market research” means data that reflects our customers (or target audience) and helps improve the success of new campaigns, products and services. Most claims related to accuracy are associated with surveys among large groups of people. We are lured into comfort by “95% confidence level” or “error range of +/- 3%.”

While these claims provide reassurances that the research is accurate, they don’t actually capture many of the sources of inaccuracy in market research. Arguably, some of the most inaccurate research is actually from large-scale surveys, as opposed to more targeted research.

Larger Sample Sizes Don’t Equal Better Accuracy

Getting the right audience is truly the difference-maker when it comes to accuracy. One of the most frequent questions we get asked as market researchers is, "How many people do I need to speak with to get accurate responses?" The sample size you need is not based on the size of the population (unless your target is very small). The sample size you need has to do with how similar or different the opinions of your target audience are.

If you get a group of similar people together, and they all agree on something, then you can have a high degree of confidence that their response is valid. It’s representative of that specific audience. When that group has varying opinions, you need to talk to a larger group to identify differences that are meaningful.
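As a rough illustration of why agreement matters more than population size, here is a minimal sketch using the standard textbook formula for estimating a proportion, n = z² · p · (1 − p) / e². The function name and the example shares (0.5 and 0.9) are assumptions chosen for illustration, and the formula assumes simple random sampling from a large population.

```python
import math
from statistics import NormalDist


def required_sample_size(expected_share, margin_of_error, confidence=0.95):
    """Approximate number of respondents needed to estimate a proportion.

    Uses n = z^2 * p * (1 - p) / e^2. Population size does not appear in
    the formula; the spread of opinion, p * (1 - p), does.
    """
    # z-score for a two-sided interval at the requested confidence level
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    variance = expected_share * (1 - expected_share)
    return math.ceil(z ** 2 * variance / margin_of_error ** 2)


# Hypothetical audiences: one evenly split, one where 90% agree.
evenly_split = required_sample_size(0.5, 0.03)
strong_agreement = required_sample_size(0.9, 0.03)
print(evenly_split, strong_agreement)
```

Under these assumptions, an evenly split audience needs a little over a thousand respondents for a +/- 3% error range at 95% confidence, while an audience in strong agreement needs fewer than 400 for the same precision.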

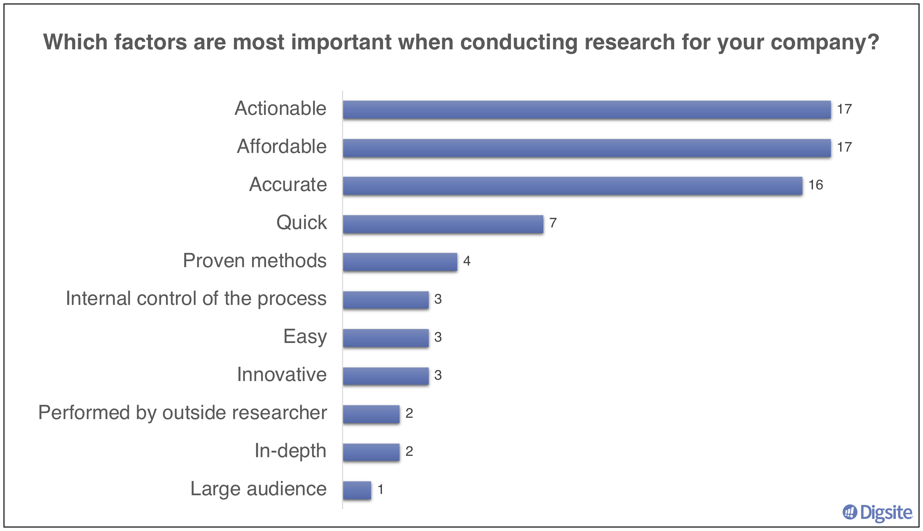

When we conducted a poll among senior market researchers, the three most important attributes for market research were being actionable, accurate and affordable. Given that nearly everyone agreed on these attributes, we don’t need to talk to more researchers to have confidence that they are important.

The verbatim responses all indicated similar thoughts and feelings, providing even more confidence in our findings.

- "It has to be accurate for it to matter."

- "We use research a lot to help with decision making so findings need to be accurate and actionable."

- "Access to timely data with a solid methodology leads to the best actionable results."

- "I don't want to spend money on data that is not accurate."

- "Research and insights don't matter if they are not actionable or accurate."

- "If it is not accurate there is no point in doing it."

6 Mistakes That Can Impact Marketing Research Accuracy

- Recruiting an audience that is too narrow or too broad

Election polling is a common way that people think about market research accuracy or inaccuracy. Thinking back to the 2020 election, many of the online polls had thousands of responses. However, because they had disproportionately more responses from either Republicans or Democrats, the results didn't necessarily reflect the distribution of people who voted on election day. Companies often make this same mistake, using a large sample that does not accurately reflect the distribution of people who would be making that purchase decision.

In consumer products, many of us were raised with the idea of talking to a “nationally representative” population. To have accurate research results, this might not be the right distribution. In reality, you benefit the most from talking to the people who are most likely to impact your sales.

For many product categories, some iteration of the 80/20 rule applies. If 80% of sales come from 20% of customers, your research should focus on these more influential customers. For example, a new concept test conducted for a food company had results from a “nationally representative” sample of 300 people. Of that sample, about 100 were people who had bought the product category in the last six months - the influential buyers. Looking at the in-market results, the purchase interest among those 100 people reflected the in-market success of the new product much more accurately than the purchase interest among the other 200 people who rarely went down that aisle in the grocery store.
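One common way to correct a sample whose mix doesn't match the people who matter is post-stratification weighting, where each group's weight is its target share divided by its share of the sample. The sketch below is illustrative only: the 100/200 split mirrors the concept test described above, but the interest counts and the 80/20 target shares are hypothetical numbers, not results from that study.

```python
from collections import Counter


def poststratification_weights(sample_groups, target_shares):
    """Weight per group = target share / observed share in the sample."""
    counts = Counter(sample_groups)
    total = len(sample_groups)
    return {g: target_shares[g] / (counts[g] / total) for g in counts}


def weighted_mean(values, groups, weights):
    """Average of values where each respondent counts by their group weight."""
    numerator = sum(v * weights[g] for v, g in zip(values, groups))
    denominator = sum(weights[g] for g in groups)
    return numerator / denominator


# Hypothetical sample: 100 category buyers and 200 non-buyers.
groups = ["buyer"] * 100 + ["non_buyer"] * 200
# Hypothetical purchase interest (1 = interested): 30 buyers, 120 non-buyers.
interest = [1] * 30 + [0] * 70 + [1] * 120 + [0] * 80

# Assumption: buyers drive 80% of category sales, non-buyers 20%.
weights = poststratification_weights(groups, {"buyer": 0.8, "non_buyer": 0.2})

unweighted = sum(interest) / len(interest)
weighted = weighted_mean(interest, groups, weights)
print(round(unweighted, 2), round(weighted, 2))
```

With these made-up numbers, the unweighted result suggests half the sample is interested, while the weighted result is noticeably lower because it leans on the people who actually buy the category.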

- Insufficient fraud protection

The foundation of good research consists of qualified and articulate participants. Online qualitative research often has better success than its in-person counterpart in driving engagement and honest responses. However, it is important to understand how your provider ensures quality participation.

Keep in mind that the number of low-quality participants and “research farms” has grown significantly over the past few years. Find out what efforts your research partners take to filter out “professional survey takers” who complete hundreds of surveys a day and try to create dozens, if not hundreds, of accounts within panels to qualify for more studies. Do they track how many other surveys the respondent has completed in the last 24 hours, not just in one platform, but across many recruiting platforms?

As the saying goes, “garbage in, garbage out.” Make sure your survey link prevents users from filling out the survey multiple times until they qualify. Ask screening questions that make it hard to determine what the key screening criteria are, and disqualify people who select “fake” answers. Use sample providers that go beyond digital fingerprinting to apply text analytics that check open-ended answers for context and quality.
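The checks described above can be sketched as a simple screening pass. This is a minimal illustration, not any provider's actual method: the `Respondent` fields, the decoy brand name, and the daily survey limit are all hypothetical, and real fraud detection typically involves far more signals.

```python
from dataclasses import dataclass


@dataclass
class Respondent:
    respondent_id: str
    device_fingerprint: str      # hypothetical identifier supplied by a panel
    surveys_last_24h: int        # hypothetical cross-platform completion count
    screener_choices: frozenset  # options picked in a screening question


DECOY_OPTIONS = frozenset({"Brand Zeta"})  # made-up "fake" answer option
MAX_SURVEYS_PER_DAY = 10                   # assumed threshold


def flag_reasons(respondent, seen_fingerprints):
    """Return the list of reasons this respondent looks low quality."""
    reasons = []
    if respondent.device_fingerprint in seen_fingerprints:
        reasons.append("duplicate_device")
    if respondent.surveys_last_24h > MAX_SURVEYS_PER_DAY:
        reasons.append("too_many_surveys")
    if respondent.screener_choices & DECOY_OPTIONS:
        reasons.append("selected_decoy")
    return reasons


def screen(respondents):
    """Split respondents into accepted IDs and rejected IDs with reasons."""
    seen, accepted, rejected = set(), [], {}
    for r in respondents:
        reasons = flag_reasons(r, seen)
        seen.add(r.device_fingerprint)
        if reasons:
            rejected[r.respondent_id] = reasons
        else:
            accepted.append(r.respondent_id)
    return accepted, rejected


panel = [
    Respondent("r1", "fp-a", 2, frozenset({"Brand Alpha"})),
    Respondent("r2", "fp-a", 1, frozenset({"Brand Alpha"})),   # same device as r1
    Respondent("r3", "fp-b", 40, frozenset({"Brand Zeta"})),   # heavy taker, decoy
]
accepted, rejected = screen(panel)
print(accepted, rejected)
```

Keeping the reasons alongside each rejected ID makes it easier to review borderline cases and to ask a sample provider how their own filters compare.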

- Mistaking attitudes for behaviors

We all know that our attitudes do not always reflect our behaviors. We may believe in protecting our planet, but throw away recyclable items because there isn’t a recycling bin nearby. Or we may believe in eating healthy, but indulge in fast food during a business trip. Getting accurate research results means recognizing that our situations impact our decisions as much as our attitudes do.

For example, when we spoke to consumers about a new food product, we knew people were overstating their need for a product when they said, “This would be great for camping.” Given that the respondents went camping infrequently, we realized they were coming up with a situation in which they could purchase the product rather than telling us how it fits in with their everyday needs.

Asking accurate questions can also be tricky if you’re not thinking about questions in the customer’s terms. “You need the mindset and language of the consumer,” said Jennifer Cooper, a market researcher and owner of BuyerSynthesis. “They might just think about your product once a year, but you think about it every day.”

- Underestimating outside influences on behavior

One common research technique we use is to ask participants to complete the sentence “when I ___, I will use ___ instead of ___ because ___.” The idea is to always understand the context of the decision, as well as the alternatives they are choosing from. Rather than simply asking someone what they like or dislike about an idea, start by asking them what they are doing now.

Help them provide answers in the specific context of their most recent experience. Otherwise, they may give you feedback about how other people would use the product, or about a situation that is uncommon. For example, with Digsite’s qual+quant platform, you get to choose how you want to interact with your participants. You can go beyond closed-ended questions and have them mark up ideas, fill in the blanks, engage in a group conversation or provide video responses, all designed to get more accurate in-context information, tell a story and get buy-in.

- Putting people in a situation where they aren’t giving honest answers

Issues with people trying to please the interviewer (what psychologists call social desirability bias) have been well documented, particularly for phone and in-person research. This can be made worse if the person asking the questions introduces their own biases.

You need to be objective in terms of what you’re searching for, and not try to simply validate your own thinking. You have to be open to surprises. It’s important to provide a safe, secure environment where the participant can trust you and what you’re trying to achieve. Participants can tell when you have internally dismissed them, which will not only yield inaccurate results, but also taint their views on participating in future research.

Jennifer Cooper of BuyerSynthesis says, “Even when the end client makes defensive comments, it’s up to you as the researcher to treat each person you interview with respect, and to realize that even the inaccurate comments they make originate from their experiences and guide their decisions.”

- Overwhelming people with surveys that are too long and have too many questions

Quantitative surveys are running into a number of issues. As Ray Poynter of NewMR has discussed, surveys that are too long are a major problem. Poynter notes that anything that takes over 20 minutes is “bound for the insight scrap heap.”

Over-surveying is also an issue. When people are bombarded with surveys, they eventually tune them out and you’re left with the people who really like the product or the ones who had a problem.

There are two problems with this. First, your non-response error will get bigger and bigger as you get responses from only those people who have something extreme to say. Second, the people you are surveying will become inherently different from the broader audience you are trying to understand.

What can you do right now to improve the accuracy of your research?

Missing the mark on accurate market research can have a tremendous impact on organizations. For innovation projects, it can lead new product teams to focus on building features that don’t ultimately add value to the customer. For branding projects, it can lead to missing the mark on market awareness or adoption. And for customer experience projects, it can lead to lost opportunities relative to the competition.

First, always start with a clear hypothesis on what you expect to find and how that might be different across your target audience. This will help you home in on who you really need to talk to, so you don’t define your audience too narrowly or too broadly. It will also give you a good start on determining how many people you need to talk to for accurate results.

Second, focus your research on understanding the consumer’s beliefs in the context of what they are doing at all stages. It’s difficult to influence behavior, and focusing on understanding their situation and the context for decision-making allows participants to be more accurate in their responses to new ideas.

Finally, consider agile consumer insights platforms vs. in-person research or surveys. Online qual+quant tools allow you to recruit the right target audience and give you the opportunity to dig deeper and truly understand the “why” behind an answer. Participants are more likely to be honest in their answers when they are responding in the comfort of their own environment without someone they are trying to please. Your team is more likely to act on the results because they can see real people’s responses and ask follow-up questions as needed.

To achieve accurate results, the biggest challenge is to find the right participants for your research project. Recruiting the right people is an area where agile qualitative holds a distinct advantage over traditional surveys. You tend to narrow in on a highly relevant audience and can easily tell by the responses whether someone is truly a relevant contributor. While you may have fewer responses, the ability to ensure that each response is accurate is much more valuable. If finding the right people is the key to achieving accuracy, then it’s up to the industry to continue to foster new ideas and methods to make it happen.

To learn more about agile methods and best practices, check out our new 3-part eBook series: Ready, Aim, Fire: A Guide to Agile Insights for Consumer Product Teams.